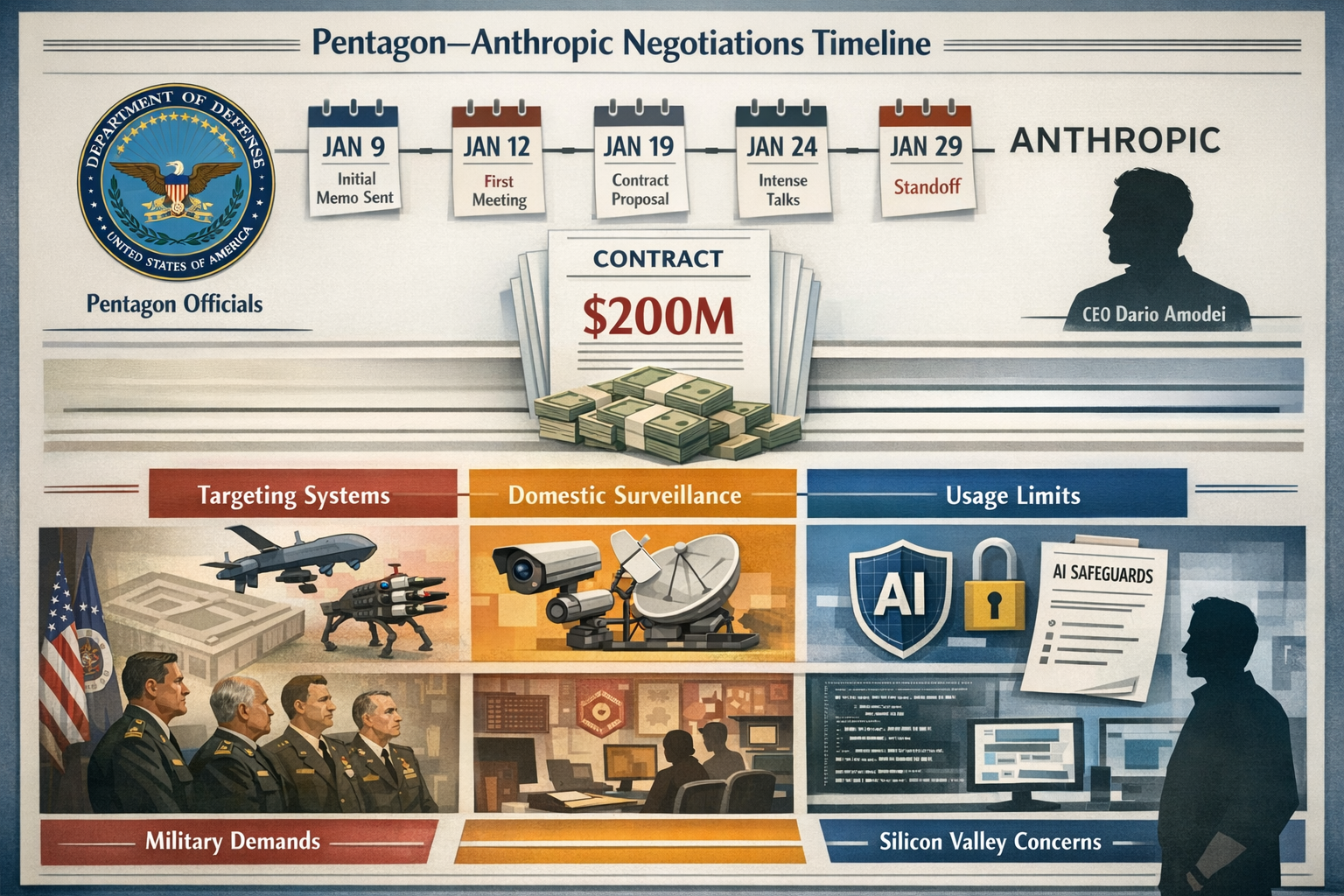

On January 29, 2026, the U.S. Department of Defense and Anthropic reached a standstill after weeks of contract talks over whether to remove safeguards, a change that could enable autonomous weapons targeting and domestic surveillance. The impasse centers on a $200 million prototype contract that would grant Pentagon officials access to advanced AI models, but without the ethical guardrails Anthropic insists must remain in place. In line with a January 9 memo outlining the AI strategy of the newly rebranded “Department of War,” Pentagon officials argue that deployment should hinge on compliance with U.S. law, not corporate usage limits, a stance that frames the talks as a test of Silicon Valley’s influence over U.S. military and intelligence personnel.

This standoff is more than a contract dispute. It’s a defining moment in the relationship between Big Tech and the U.S. military, raising fundamental questions about who controls the ethical boundaries of artificial intelligence in warfare. Anthropic has emphasized national-security applications while setting responsible-use limits, noting its AI is “extensively used for national security missions by the U.S. government” after receiving Pentagon contracts last year. Yet the Defense Department’s push for unrestricted access has put corporate responsibility and military necessity on a collision course.

A Defense Department spokesperson did not immediately respond to requests for comment. Reporting says recent battlefield deaths have compounded concern in Silicon Valley about government use of AI for potential violence. In an essay this week, CEO Dario Amodei outlined ethical limits, stating that AI should support defense except where it makes us more like autocratic adversaries, a position that has intensified tensions with the Trump administration.

Key Takeaways

- Contract at Impasse: The Pentagon and Anthropic have reached a deadlock over a $200 million AI contract, with the company refusing to remove safeguards preventing autonomous weapons targeting and domestic surveillance [1][2]

- Pentagon’s Position: Defense officials argue they must deploy commercial AI freely as long as it complies with U.S. law, regardless of vendor restrictions or corporate usage policies [3][4]

- Anthropic’s Red Lines: CEO Dario Amodei has drawn clear boundaries, stating the company won’t enable tactics that make the U.S. resemble authoritarian adversaries [1][5]

- Broader Implications: The dispute reflects fundamental questions about Silicon Valley’s role in military operations and who ultimately controls AI ethics in warfare [6]

- National Security Stakes: The standoff comes after the Pentagon awarded similar contracts to Google, OpenAI, and xAI, with Elon Musk’s Grok already announced for military use [2][4]

Understanding the January 29 Standoff Between Pentagon and Anthropic

The breakdown in negotiations didn’t happen overnight. Since early January 2026, Pentagon officials and Anthropic representatives have engaged in increasingly tense discussions about the terms under which Claude—Anthropic’s flagship AI model—would be deployed for military operations.

The Core Dispute

At the heart of the conflict are two specific military requirements that Anthropic considers non-negotiable red lines [1][3]:

- Autonomous Targeting Systems: The Pentagon wants to integrate large language models into weapons systems that could select and engage targets without human intervention at every decision point

- Domestic Surveillance Capabilities: Defense officials seek the ability to analyze massive datasets that could potentially include U.S. citizen information

Anthropic has consistently refused to modify its AI models to enable these capabilities, arguing that such applications cross ethical boundaries that distinguish democratic nations from authoritarian regimes [5].

What the January 9 Memo Revealed

The Pentagon’s January 9, 2026 memo laid the groundwork for this confrontation. The document outlined the Department of War’s new AI strategy, emphasizing that “warfighters need access to models that provide decision superiority in the battlefield” [4]. More significantly, it argued that commercial AI systems must be “free from ideological constraints that limit lawful military applications” [3].

Defense Secretary Pete Hegseth amplified this position in mid-January, publicly criticizing AI models that “won’t allow you to fight wars.” Multiple sources confirmed this was a direct reference to Anthropic’s stance on autonomous weapons [1][2].

“The Pentagon’s position is clear: if something is legal under U.S. law, private companies shouldn’t be able to veto military operations through usage restrictions.” — Pentagon official speaking on background [3]

Contract Talks Breakdown: Silicon Valley Versus Military Authority

The $200 million contract at stake represents just one piece of a larger Pentagon initiative to integrate cutting-edge AI across military operations. The Defense Department awarded similar prototype agreements to Google, OpenAI, and Elon Musk’s xAI—with xAI’s Grok model already announced for Pentagon deployment [2][4].

Why Anthropic Is Different

When Anthropic originally announced its Pentagon contract award, the company highlighted Claude’s “rigorous safety testing, collaborative governance development, and strict usage policies” as ideal for sensitive national security missions [5]. The company positioned itself as the responsible AI provider—one that could support defense needs without compromising ethical standards.

But that positioning now puts Anthropic in direct conflict with military planners who view such restrictions as operational constraints.

The Technical Challenge

Defense officials aren’t just asking for philosophical flexibility. They want specific technical modifications that would create what some observers call a “dark” version of Anthropic’s Opus 4.5 model—one stripped of the safeguards built into the commercial product [1].

Observers in the technical community have raised concerns about this approach, flagging “leak risks” if such an uncensored military variant were created. Personnel with access could potentially release classified materials or misuse the system in ways that would be impossible with standard commercial models [1][6].

What Anthropic Is Willing to Do

The company hasn’t taken an absolutist position against military work. Anthropic has stated it’s willing to support national defense projects including [5]:

- Logistics optimization: Supply chain management and resource allocation

- Data analysis: Processing intelligence information and identifying patterns

- Operational planning: Strategic decision support that keeps humans in command

- Cybersecurity: Defending against threats and protecting critical infrastructure

The distinction Anthropic draws is between supporting military operations and enabling autonomous violence or mass surveillance of American citizens.

Dario Amodei’s Ethical Framework and Tensions with Trump Administration

In an essay published this week, Anthropic CEO Dario Amodei articulated the company’s position in stark terms. AI should support national defense, he argued, “except where it makes us more like autocratic adversaries” [1][5].

This framing directly challenges the Trump administration’s approach to military AI deployment. By invoking the specter of authoritarian regimes, Amodei is essentially arguing that some military applications—even if technically legal—violate the democratic values that American military power is meant to protect.

The Autocratic Adversary Standard

What does it mean to “become like autocratic adversaries”? Amodei’s essay points to several characteristics [1]:

- Autonomous killing without human judgment: Systems that select and eliminate targets based purely on algorithmic decisions

- Mass surveillance of domestic populations: Using AI to monitor citizens without warrants or oversight

- Opacity and unaccountability: Deploying systems where decision-making processes can’t be explained or reviewed

These aren’t hypothetical concerns. China’s use of AI for surveillance in Xinjiang and Russia’s development of autonomous weapons systems provide real-world examples of the paths Amodei argues the U.S. must avoid.

Political Backlash

The Trump administration has not responded kindly to Anthropic’s position. Secretary Hegseth’s public criticism reflects broader frustration within the Pentagon that Silicon Valley companies are imposing their values on military operations [2][4].

Some defense officials have privately characterized Anthropic’s stance as “virtue signaling” that undermines national security. Others argue that tech companies lack the expertise to make judgments about appropriate military force [3].

Silicon Valley’s Growing Unease with Military AI Applications

Reporting indicates that recent battlefield deaths have compounded concern throughout Silicon Valley about government use of AI for potential violence [1]. While specific incidents haven’t been publicly disclosed, the timing suggests that real-world consequences of military AI deployment are weighing on tech industry leaders.

This isn’t the first time Silicon Valley has grappled with military contracts. Google famously withdrew from the Pentagon’s Project Maven in 2018 after employee protests over AI-powered drone targeting. Microsoft faced similar internal resistance over its HoloLens contract with the Army.

The Accountability Problem

The Anthropic-Pentagon standoff highlights a structural challenge in military AI development. As one analysis notes, contractors can agree to safeguards during development, but “sovereign military actors retain decision-making authority over AI deployment once tools enter government custody” [6].

In other words, even if Anthropic maintains restrictions in its commercial product, there’s no guarantee the Pentagon won’t find ways to circumvent those limitations once the technology is in military hands.

Competing AI Providers

The Pentagon’s strategy of awarding contracts to multiple AI companies creates competitive pressure. If Anthropic won’t remove safeguards, Google, OpenAI, or xAI might be more accommodating [2][4].

Elon Musk’s xAI has already signaled willingness to work with the military without the ethical constraints Anthropic insists upon. This puts Anthropic in a difficult position: maintain principles and lose influence over military AI development, or compromise values to stay at the table.

What This Means for Mohawk Valley Residents and National Security

For citizens in Utica, NY and across the Mohawk Valley, this dispute might seem distant from daily concerns. But the questions at stake have profound implications for democratic governance and civil liberties.

Domestic Surveillance Concerns

The Pentagon’s desire to analyze datasets potentially including U.S. citizen information should raise red flags for anyone who values privacy and Fourth Amendment protections [1][3]. While defense officials argue such analysis would comply with existing law, the scale and sophistication of AI-powered surveillance represent a quantum leap in government monitoring capabilities.

Local activists focused on police reform and government accountability should pay close attention. The tools being developed for military intelligence could easily be repurposed for domestic law enforcement—potentially without adequate oversight or transparency.

Economic and Workforce Implications

The broader trend of military-tech integration also affects economic development in regions like upstate New York. Defense contracts represent billions in potential revenue and high-skilled jobs. Communities competing for manufacturing jobs and workforce development opportunities need to consider whether they want to attract military AI production facilities.

Questions for Congressional Representatives

Mohawk Valley residents should ask their representatives in Congress:

- What oversight mechanisms exist for military AI deployment?

- How will domestic surveillance capabilities be constrained?

- What role should private companies play in setting ethical boundaries for military technology?

- Are current laws adequate to govern autonomous weapons systems?

These aren’t abstract policy questions. They’re about the kind of society we want to live in and the values we want our military to embody.

The Broader Context: Who Controls Military AI Ethics?

The Pentagon-Anthropic standoff is ultimately about power and accountability. Should private companies be able to impose ethical constraints on military operations? Or should democratically accountable government officials have final say over how AI is deployed in warfare?

The Case for Corporate Guardrails

Anthropic’s position rests on several arguments:

- Technical expertise: AI companies understand the capabilities and risks of their systems better than military planners

- Ethical responsibility: Developers have moral obligations that transcend legal compliance

- Long-term consequences: Short-term military advantages may create precedents that undermine democratic values

- Market influence: Companies can use their market position to promote responsible AI development

The Case for Military Authority

Pentagon officials counter with their own reasoning:

- Democratic accountability: Elected officials and military leadership answer to voters; tech CEOs don’t

- National security: Military professionals are best positioned to assess operational needs

- Legal compliance: If something is lawful under U.S. law, private restrictions are inappropriate

- Competitive disadvantage: Adversaries won’t impose similar constraints on their AI development

Both positions have merit, which is why this dispute is so difficult to resolve.

What Happens Next: Possible Outcomes and Implications

As of late January 2026, several scenarios could unfold:

Scenario 1: Anthropic Compromises

The company could agree to modified safeguards that give the Pentagon more flexibility while maintaining some ethical boundaries. This might involve:

- Human oversight requirements that are less stringent than current policies

- Domestic surveillance capabilities with additional legal protections

- Tiered access levels based on specific use cases

Scenario 2: Pentagon Moves to Other Providers

Defense officials could simply award the full contract value to more accommodating companies like xAI or OpenAI. This would:

- Remove Anthropic from military AI development

- Reduce ethical constraints on Pentagon AI deployment

- Potentially create competitive pressure for Anthropic to reconsider

Scenario 3: Congressional Intervention

Lawmakers could step in to establish clearer guidelines for military AI use, potentially:

- Mandating specific safeguards regardless of vendor

- Creating oversight mechanisms for autonomous weapons

- Establishing legal frameworks for AI-enabled surveillance

Scenario 4: Extended Standoff

The impasse could continue indefinitely, with neither side willing to compromise. This would:

- Delay military AI deployment timelines

- Create uncertainty for other tech companies considering defense work

- Potentially advantage adversaries who face fewer constraints

Taking Action: What Citizens Can Do

This isn’t just a Silicon Valley or Washington, D.C. issue. Citizens across the Mohawk Valley and throughout America have a stake in how military AI is developed and deployed.

Immediate Steps

- Contact your representatives: Reach out to your U.S. House representative and Senators Schumer and Gillibrand to express your views on military AI oversight

- Educate yourself: Follow reporting on military AI development and autonomous weapons systems

- Support transparency: Advocate for public disclosure of military AI capabilities and usage policies

- Engage locally: Attend town hall meetings and raise these issues with local officials

Long-Term Engagement

- Join advocacy organizations: Groups like the Campaign to Stop Killer Robots work on autonomous weapons policy

- Support accountability journalism: Subscribe to outlets that investigate military technology and government surveillance

- Participate in public comment: When federal agencies seek input on AI regulations, make your voice heard

- Vote informed: Consider candidates’ positions on military technology and civil liberties

The decisions being made now about military AI will shape warfare and surveillance for decades to come. Democratic accountability requires an informed and engaged citizenry.

Conclusion: Democracy, Technology, and the Future of Warfare

The January 29 standoff between the Pentagon and Anthropic represents a critical moment in the evolution of military technology. At stake are fundamental questions about who controls the ethical boundaries of artificial intelligence in warfare, how we balance national security with civil liberties, and whether democratic values can survive the age of autonomous weapons.

Anthropic’s refusal to remove safeguards against autonomous targeting and domestic surveillance reflects a principled stance that some military applications cross moral lines regardless of their legality. The Pentagon’s insistence on unrestricted access to AI capabilities reflects legitimate concerns about maintaining military superiority and operational flexibility.

Both positions deserve serious consideration. But the ultimate decision shouldn’t be made behind closed doors by tech executives and defense officials alone. This is a question for the American people, debated through democratic processes with full transparency about the capabilities being developed and the constraints being proposed.

For Mohawk Valley residents and citizens nationwide, the time to engage is now—before the precedents are set and the systems are deployed. The future of warfare, surveillance, and democratic accountability hangs in the balance.

What you can do today:

- Call your congressional representatives and demand oversight hearings on military AI

- Share this article with friends and family to spread awareness

- Attend local town halls and raise these issues with elected officials

- Support organizations advocating for responsible AI development

- Stay informed about military technology policy and vote accordingly

The decisions we make—or fail to make—about military AI will define what kind of nation we become. Let’s make sure those decisions reflect our values and protect our freedoms.

References

[1] AI Safeguards Ignite Pentagon-Anthropic Standoff Over Lethal Limits – https://www.webpronews.com/ai-safeguards-ignite-pentagon-anthropic-standoff-over-lethal-limits/

[2] Anthropic Pentagon Contract Clash: Why the $200M AI Deal is Frozen – https://www.remio.ai/post/anthropic-pentagon-contract-clash-why-the-200m-ai-deal-is-frozen

[3] Pentagon and Anthropic Clash Over Who Controls Military AI Use – https://thegamingboardroom.com/2026/01/30/pentagon-and-anthropic-clash-over-who-controls-military-ai-use/

[4] Amazon-Backed Anthropic, Pentagon Clash Over AI Safeguards – https://www.benzinga.com/markets/equities/26/01/50255574/amazon-backed-anthropic-pentagon-clash-over-ai-safeguards-officials-push-back-against-limits-on-autonomous-weapons-surveillance-report

[5] Pentagon and Anthropic at Odds Over Military AI Applications – https://www.eweek.com/news/pentagon-anthropic-military-ai-applications/

[6] Anthropic disputes Pentagon over military AI scope in $200M contract – https://www.mexc.co/en-IN/news/596365